The quality of modern software increasingly determines the success of technical products. At the same time, complexity is rising, particularly in embedded systems used in safety-critical fields such as electric mobility, industrial automation, and medical technology. Traditional testing methods are reaching their limits. This is precisely where a new approach is gaining importance: AI-powered test case generation.

Instead of defining test cases exclusively by hand, this approach uses existing test data and expands it with the help of machine learning. The goal is not only efficiency but, above all, better coverage of complex system states—that is, precisely the situations in which errors are particularly difficult to detect.

Development processes already generate large amounts of test data. This data comes from various sources: automated tests, integration tests, and real-world field trials. While it contains valuable information about a system’s behavior, it is often used only for immediate analysis.

The DeepTest research project by ITPower Solutions takes a different approach: it uses this data as the basis for a learning system capable of generating new test cases. The central question here is whether new and realistic tests can be derived from existing test runs.

To make this possible, test data is not treated merely as a set of measurement values. Instead, the AI interprets it as temporal signal traces, that is, as patterns that evolve over time. These traces can also be viewed as images, similar to the waveforms on an oscilloscope screen. The two perspectives complement each other and make it possible to identify complex relationships that remain hidden when individual values are considered in isolation.
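The two views can be illustrated with a toy example. The sketch below assumes that each signal is sampled at a fixed rate and stored as a plain list of floats (the time-series view), and renders it into a small binary pixel grid with one column per sample and one row per amplitude level (the image view). The function name `rasterize`, its parameters, and the binning scheme are illustrative assumptions, not DeepTest's actual preprocessing.

```python
from typing import List


def rasterize(trace: List[float], height: int = 8,
              v_min: float = 0.0, v_max: float = 1.0) -> List[List[int]]:
    """Render a sampled signal as a binary image, oscilloscope style.

    Hypothetical helper for illustration: one column per sample, one row per
    amplitude level; row 0 is the top of the screen (v_max).
    """
    if height < 2 or v_max <= v_min:
        raise ValueError("need height >= 2 and v_max > v_min")
    image = [[0] * len(trace) for _ in range(height)]
    for col, value in enumerate(trace):
        clamped = min(max(value, v_min), v_max)          # keep the value on screen
        level = round((clamped - v_min) / (v_max - v_min) * (height - 1))
        image[height - 1 - level][col] = 1               # light one pixel per column
    return image


# A digital signal that goes high for two samples:
for row in rasterize([0, 0, 1, 1, 0], height=2):
    print("".join("#" if px else "." for px in row))
# ..##.
# ##..#
```

A practical pipeline would likely use a much finer grid and draw connecting lines between samples so that signal edges appear as vertical strokes, as they do on a real oscilloscope.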

A key aspect of AI-driven test case generation is pattern recognition. Technical systems contain numerous implicit rules that determine their behavior. For example, if one signal is triggered, another signal must follow after a certain delay. In many cases, multiple signals must run synchronously or influence one another.

Traditional tests often describe such dependencies only partially. Instead, the dependencies emerge implicitly from the system itself and are reflected in existing test data. This is precisely where machine learning comes into play: the models learn these relationships from the data itself, without them having to be specified explicitly.
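To make the idea of an implicit rule concrete, the sketch below recovers the trigger-to-response delay described earlier directly from recorded binary signals, without that delay being specified anywhere. It is a deliberately simple statistical stand-in, not a machine learning model: it shows the kind of relationship a generative model has to pick up from the data, not how DeepTest learns it. The function names and example signals are hypothetical.

```python
from typing import List


def rising_edges(signal: List[int]) -> List[int]:
    """Sample indices at which a binary signal switches from 0 to 1."""
    return [i for i in range(1, len(signal)) if signal[i - 1] == 0 and signal[i] == 1]


def observed_delays(trigger: List[int], response: List[int]) -> List[int]:
    """For each trigger edge, the number of samples until the next response edge."""
    response_edges = rising_edges(response)
    delays = []
    for t in rising_edges(trigger):
        later = [r for r in response_edges if r >= t]
        if later:                         # ignore triggers without any response
            delays.append(later[0] - t)
    return delays


# Two recorded test runs of the same (hypothetical) trigger/response pair
runs = [
    ([0, 1, 1, 0, 0, 0, 1, 0, 0, 0], [0, 0, 0, 1, 1, 0, 0, 0, 0, 1]),
    ([0, 0, 1, 0, 0, 0, 0, 0], [0, 0, 0, 0, 1, 1, 0, 0]),
]
delays = [d for trig, resp in runs for d in observed_delays(trig, resp)]
print(f"response follows trigger after {min(delays)}..{max(delays)} samples")
# response follows trigger after 2..3 samples
```

A generative model captures regularities like this one implicitly, together with many others that nobody thought to write down.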

This also means that test cases are no longer derived exclusively from specifications, but from real-world system behavior. This shifts the focus from manual design to data-driven test case generation.

To identify these patterns and generate new test data, various machine learning techniques are employed. Generative models, which can generate new data rather than merely analyzing existing data, are particularly relevant.

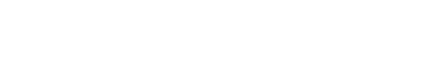

So-called Generative Adversarial Networks (GANs) play a central role. A GAN consists of two competing neural networks: a generator that produces new data and a discriminator that tries to tell generated data apart from real data. Through this competition, the generated data becomes increasingly realistic over time. In practice, Wasserstein GANs in particular have proven stable and powerful. Instead of making a binary real-or-fake decision, their critic estimates the Wasserstein distance between the distributions of real and generated data, which measures the differences between them more precisely and provides a more useful training signal (Figure 1).
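For reference, the Wasserstein GAN objective in its standard form (Arjovsky et al., 2017) can be written as follows, where $G$ is the generator, $D$ the critic, $P_r$ the distribution of real traces, and $p(z)$ the noise distribution fed to the generator:

$$
\min_{G}\; \max_{\lVert D \rVert_{L} \le 1}\; \mathbb{E}_{x \sim P_r}\big[D(x)\big] \;-\; \mathbb{E}_{z \sim p(z)}\big[D(G(z))\big]
$$

The constraint $\lVert D \rVert_{L} \le 1$ restricts the critic to 1-Lipschitz functions. By the Kantorovich-Rubinstein duality, the inner maximum then equals the Wasserstein-1 distance $W_1(P_r, P_g)$, where $P_g$ is the distribution of the generated samples $G(z)$. In practice, the constraint is enforced only approximately, for example by weight clipping in the original WGAN or by a gradient penalty in the WGAN-GP variant.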

In addition, variational autoencoders (VAEs) are used. They compress data into a compact latent representation and then reconstruct it. In doing so, they learn the essential structure of the data and can generate new variants by sampling from that representation. A third approach uses WaveNets. Originally developed to generate raw audio waveforms, which are themselves time series, they predict each new signal value from the values that precede it.
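The core building block of a WaveNet is a stack of causal, dilated convolutions: each output sample depends only on current and past input samples, and doubling the dilation from layer to layer lets the receptive field grow exponentially with depth. The sketch below shows only this building block in plain Python. The gated activations, residual and skip connections, and output distribution of a full WaveNet are omitted, and the function names are illustrative.

```python
from typing import List


def causal_dilated_conv(x: List[float], weights: List[float], dilation: int) -> List[float]:
    """y[t] = sum_i weights[i] * x[t - i*dilation]; samples before t=0 count as zero."""
    y = []
    for t in range(len(x)):
        acc = 0.0
        for i, w in enumerate(weights):
            past = t - i * dilation        # only the present and the past are used
            if past >= 0:
                acc += w * x[past]
        y.append(acc)
    return y


def receptive_field(kernel_size: int, dilations: List[int]) -> int:
    """How many input samples (current one included) a stack of such layers can see."""
    return 1 + sum((kernel_size - 1) * d for d in dilations)


# An impulse passed through layers with dilations 1, 2, 4 (kernel size 2, all weights 1)
h = [1.0] + [0.0] * 9
for d in [1, 2, 4]:
    h = causal_dilated_conv(h, [1.0, 1.0], d)
print(h)                               # 1.0 for t = 0..7, then 0.0: the impulse reaches 8 outputs
print(receptive_field(2, [1, 2, 4]))   # 8
```

Three layers already cover 8 samples here; ten layers with dilations 1, 2, 4, ..., 512 would cover 1,024 samples, which is why this structure can model long temporal dependencies at modest cost.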

Interestingly, the models do not initially distinguish between a system’s inputs and outputs. They simply treat all signals as trajectories. Only afterward are the generated signals split back into inputs and expected responses, which yields complete test cases.
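Once a model has produced a multi-channel trace, turning it into a test case can be as simple as sorting its channels into stimuli and expected responses. The sketch assumes that traces are stored as a mapping from channel name to samples and that the set of input channels is known from the test bench configuration. `split_trace` and the channel names below are hypothetical.

```python
from typing import Dict, List, Set, Tuple

Trace = Dict[str, List[float]]   # channel name -> sampled values


def split_trace(trace: Trace, input_channels: Set[str]) -> Tuple[Trace, Trace]:
    """Split a generated trace into stimuli (to be applied) and expected responses (to be checked)."""
    missing = set(input_channels) - set(trace)
    if missing:
        raise KeyError(f"input channels not in trace: {sorted(missing)}")
    stimuli = {name: v for name, v in trace.items() if name in input_channels}
    expected = {name: v for name, v in trace.items() if name not in input_channels}
    return stimuli, expected


generated = {
    "enable_request": [0, 1, 1, 1, 0],   # hypothetical input signal
    "relay_closed":   [0, 0, 1, 1, 0],   # hypothetical system response
}
stimuli, expected = split_trace(generated, {"enable_request"})
print(sorted(stimuli), sorted(expected))   # ['enable_request'] ['relay_closed']
```

A test runner could then replay `stimuli` against the device under test and compare the recorded outputs with `expected`, usually within some tolerance.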

A key advancement of this approach lies in the evaluation of the generated test data. While many AI applications rely on subjective or visual assessments, the project developed a quantitative evaluation approach.

In this process, known signal rules are formally described and the generated data is then checked to ensure it adheres to these rules. The quality of a generated test dataset can thus be determined using measurable criteria, such as the proportion of correctly reproduced patterns.
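The sketch below shows what such a check could look like for one rule type, the trigger-and-delay pattern mentioned earlier. It assumes binary signals stored per channel, counts each trigger occurrence as one rule instance, and reports the share of instances that the generated data reproduces correctly. `DelayRule` and `rule_compliance` are hypothetical names, and the project’s actual rule language and metric may differ.

```python
from dataclasses import dataclass
from typing import Dict, List

Trace = Dict[str, List[int]]   # channel name -> binary samples


def rising_edges(signal: List[int]) -> List[int]:
    """Sample indices at which a binary signal switches from 0 to 1."""
    return [i for i in range(1, len(signal)) if signal[i - 1] == 0 and signal[i] == 1]


@dataclass(frozen=True)
class DelayRule:
    """Every rising edge on `trigger` must be followed by a rising edge on
    `response` after min_delay..max_delay samples (inclusive)."""
    trigger: str
    response: str
    min_delay: int
    max_delay: int

    def check(self, trace: Trace) -> List[bool]:
        """One verdict per trigger edge found in the trace."""
        responses = rising_edges(trace[self.response])
        return [
            any(self.min_delay <= r - t <= self.max_delay for r in responses)
            for t in rising_edges(trace[self.trigger])
        ]


def rule_compliance(traces: List[Trace], rules: List[DelayRule]) -> float:
    """Share of rule instances that the generated traces reproduce correctly."""
    verdicts = [ok for trace in traces for rule in rules for ok in rule.check(trace)]
    if not verdicts:
        raise ValueError("no rule instances found; the score would be meaningless")
    return sum(verdicts) / len(verdicts)


rule = DelayRule("enable_request", "relay_closed", min_delay=1, max_delay=2)
generated = [
    {"enable_request": [0, 1, 0, 0, 0, 0], "relay_closed": [0, 0, 0, 1, 0, 0]},  # delay 2: ok
    {"enable_request": [0, 1, 0, 0, 0, 0], "relay_closed": [0, 0, 0, 0, 0, 1]},  # delay 4: violation
]
print(rule_compliance(generated, [rule]))   # 0.5
```

Reporting the score per rule as well as in aggregate makes it easier to see which relationships a model has failed to learn.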

This objective evaluation is particularly important because it is what makes the use of AI in the testing process trustworthy. Without traceable metrics, applying such methods in practice would be difficult to justify.

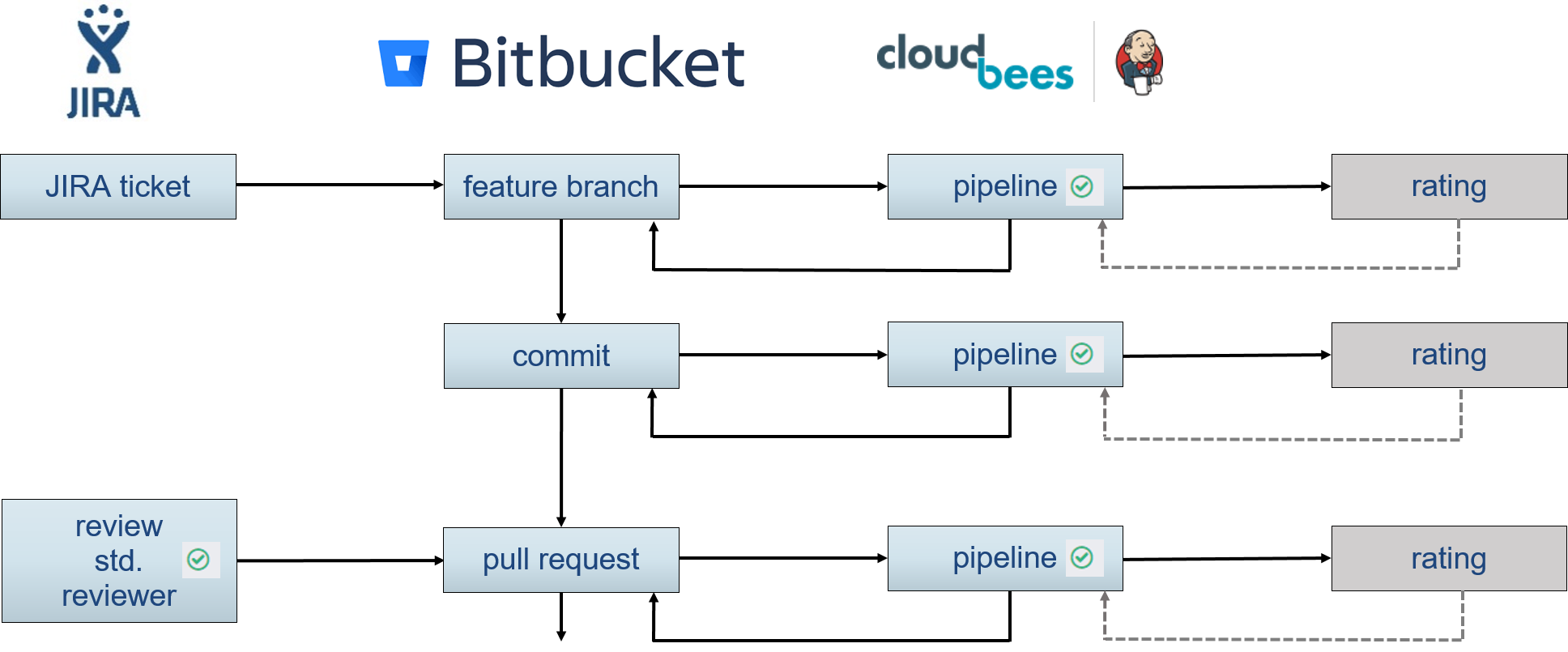

A major advantage of AI-powered test generation lies in its integration into existing continuous integration processes. Test data generated as part of the development process can be used directly to improve the models.

This results in a self-learning system that improves with every iteration. New code changes not only lead to new tests but also expand the AI’s knowledge base. Test generation thus becomes a continuous process that dynamically adapts to the system (Figure 2).
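One iteration of this feedback loop can be sketched as a function that a CI job might call after each build. Everything model-specific is passed in as a callable: `train` would wrap GAN, VAE, or WaveNet training, `generate` the sampling step, and `score` a rule-based metric such as the proportion of correctly reproduced patterns. All names and the acceptance threshold are assumptions for illustration, and whether a pipeline retrains from scratch or fine-tunes on each build is a design choice this sketch leaves open.

```python
from typing import Callable, List, TypeVar

T = TypeVar("T")   # one test trace, in whatever representation the pipeline uses
M = TypeVar("M")   # a trained generative model


def ci_iteration(
    pool: List[T],
    new_runs: List[T],
    train: Callable[[List[T]], M],
    generate: Callable[[M, int], List[T]],
    score: Callable[[T], float],
    threshold: float,
    n_candidates: int,
) -> List[T]:
    """Run one pass of the data -> model -> new tests loop; returns accepted tests."""
    pool.extend(new_runs)                          # real runs from this build grow the data
    model = train(pool)                            # retrain on the enlarged pool
    candidates = generate(model, n_candidates)     # synthesize new traces
    return [c for c in candidates if score(c) >= threshold]   # keep rule-compliant ones


# Toy stand-ins: "traces" are integers, the "model" is the pool maximum,
# and only even numbers count as rule-compliant.
pool = [1, 2]
accepted = ci_iteration(
    pool, new_runs=[3],
    train=max,
    generate=lambda model, n: [model + i for i in range(n)],
    score=lambda c: 1.0 if c % 2 == 0 else 0.0,
    threshold=0.5, n_candidates=4,
)
print(pool, accepted)   # [1, 2, 3] [4, 6]
```

The pool is extended in place so that each build’s runs carry over into the next iteration; a production pipeline would more likely keep it in persistent storage.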

The results to date show that AI-assisted test generation works in principle and offers genuine added value. Wasserstein GANs in particular deliver convincing results and achieve a high degree of compliance with the defined signal rules. WaveNets also show promising results, especially in modeling temporal dependencies.

A notable aspect is that the generated data is visually almost indistinguishable from real test data. Differences only become apparent through quantitative analysis—which underscores the importance of objective evaluation methods.

Despite the promising results, AI-assisted test generation is not a panacea. A key issue is the dependence on existing data. The quality of the generated tests depends directly on the quality and diversity of the training data. If certain scenarios are not included in the data, they cannot be reliably generated by the AI either.

Another problem is that not all relevant patterns are known in advance or can be clearly defined. While AI can learn implicit relationships, how it represents them remains partially opaque. This makes it difficult to verify the results, particularly in safety-critical areas.

Evaluating the generated data is also not trivial, even with quantitative approaches. The defined metrics cover only some of the relevant quality aspects. There remains a risk that generated tests are formally correct but still fail to provide relevant new insights.

Added to this is the technical effort involved. The development, training, and integration of such models require specialized expertise as well as the appropriate infrastructure. For many companies, this initially presents a barrier to entry.

AI-powered test generation opens up new possibilities for testing complex systems. It enables the efficient use of existing test data to derive new, realistic test cases. This can lead to a significant improvement in test coverage, particularly in areas with high system complexity.

At the same time, it is clear that the approach must be used with care. The quality of the results depends heavily on the underlying data, the models used, and the evaluation methods. AI does not replace traditional testing, but rather complements it effectively.

However, companies that embrace this approach early on gain a clear competitive advantage. This is because the ability to intelligently automate and continuously improve testing processes is increasingly becoming a decisive factor in software development.

I am your sales representative and will be happy to advise you on all questions relating to our services and products! Get in touch or simply make an appointment for a free consultation call.

Email: sebastian.stritz@itpower.de

Phone: +49 (0)30 6098501-17