At ITPower Solutions, we used automated module and system testing to validate a medical device for mechanical cardiac support in a customer project. In this safety-critical environment, high standards of quality and reliability are naturally required. Testing components and the overall system is therefore an important part of the development process and quality assurance.

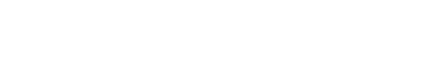

The cardiac support system developed in the project consisted of several modules: communication mediators, control computers, drives, control systems (subcomponents of the drive), and chargers (Figure 1). The test object communicates with its environment via approximately 30 hardware signals, 5 serial interfaces, and 2 buses (CAN and I²C). The test strategy consisted of testing the various modules individually, in combination, and then the system as a whole.

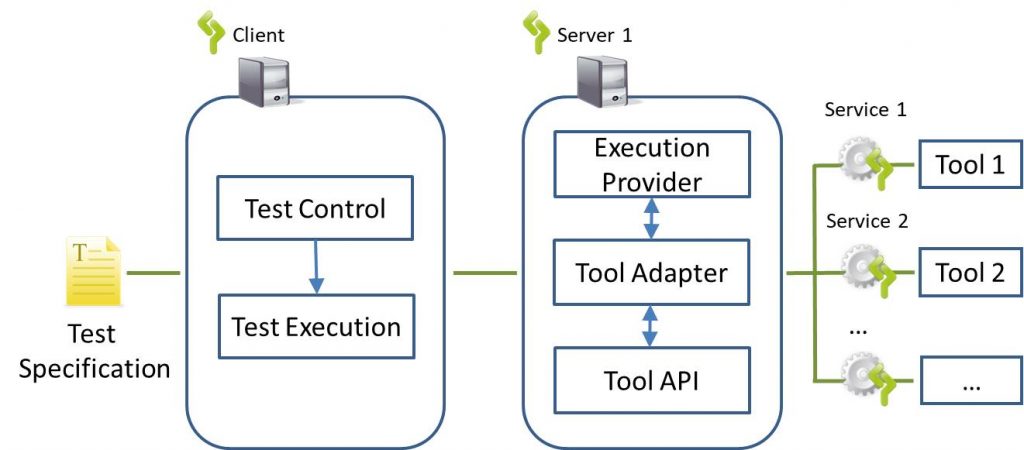

ContinoProva is based on a client-server architecture and integrates various hardware and software tools for automated testing. It is well suited to use cases in which tools already used for manual testing are to be integrated into the test environment and reused, so that employees' experience and expertise with those tools continue to be leveraged. The architecture abstracts from the individual user and programming interfaces of the tools and provides a uniform front end for test specification and tool control. The user can therefore specify and automatically execute tests across tool boundaries.

During a test run with ContinoProva, services are requested step by step from the individual integrated tools in accordance with the test specification defined in the front end, e.g., sending bus messages via the bus tool or reading signals at the output of the test object via the oscilloscope. Any number of servers, services, and tools can be integrated. The client-server architecture simplifies both controlling the connected tools and exchanging signals between them. Because the tools can be distributed across different locations, test execution also benefits considerably in terms of performance (Figure 2).

This architecture requires that the connected tools provide suitable application programming interfaces (APIs). ContinoProva does not prescribe any particular form of test specification. It executes the test steps defined by the test specification sequentially and feeds the execution results back into the test sequence.
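The sequential execution with result feedback described above can be illustrated with a minimal sketch. It is not ContinoProva code: the `Step` structure, the `run_test` function, and the service names (`bus.send`, `scope.read`, `check.equal`) are assumptions made for illustration, and the services are simple stand-ins rather than real tools.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List

# Hypothetical service signature: step parameters plus all results so far.
Service = Callable[[Dict[str, Any], Dict[str, Any]], Any]


@dataclass
class Step:
    name: str                 # key under which the step result is stored
    service: str              # name of the service requested for this step
    params: Dict[str, Any] = field(default_factory=dict)


def run_test(steps: List[Step], services: Dict[str, Service]) -> Dict[str, Any]:
    """Execute the steps in order; each step can read earlier results."""
    results: Dict[str, Any] = {}
    for step in steps:
        results[step.name] = services[step.service](step.params, results)
    return results


# Stand-in services (assumptions, no real tools involved).
def send_bus_message(params: Dict[str, Any], results: Dict[str, Any]) -> Any:
    return {"sent_id": params["can_id"]}


def read_signal(params: Dict[str, Any], results: Dict[str, Any]) -> Any:
    # Pretend the output goes high once a bus message has been sent.
    return 1 if "send" in results else 0


def check_equal(params: Dict[str, Any], results: Dict[str, Any]) -> Any:
    return results[params["actual"]] == params["expected"]


SERVICES: Dict[str, Service] = {
    "bus.send": send_bus_message,
    "scope.read": read_signal,
    "check.equal": check_equal,
}

STEPS = [
    Step("send", "bus.send", {"can_id": 0x123}),
    Step("level", "scope.read", {"channel": 1}),
    Step("verdict", "check.equal", {"actual": "level", "expected": 1}),
]

outcome = run_test(STEPS, SERVICES)
```

In this sketch the last step evaluates a value produced by an earlier step, which is one simple way of feeding execution results back into the test sequence.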

ContinoProva Client and Server each consist of individual software modules. The client contains a module called Test Control, which takes the data from the current test step of the test specification and generates an executable code unit from it. In another module called Test Execution, the tools involved in a specific test step are coordinated.

The separation of Test Control and Test Execution makes it easier to adapt the implementation to different types of test specifications. The Test Execution module is matched on the server side by the Execution Provider module. To enable easy interchangeability of tools, communication with the respective tool API is encapsulated in an extra module called Tool Adapter.
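The role of the Tool Adapter can be sketched as follows. The class and method names (`ToolAdapter.execute`, `ExecutionProvider.register`, `ExecutionProvider.request`) are hypothetical and only illustrate the idea of hiding a tool-specific API behind a uniform interface; the actual module interfaces are not described here.

```python
from abc import ABC, abstractmethod
from typing import Any, Dict


class ToolAdapter(ABC):
    """Hypothetical uniform interface hiding one tool's native API."""

    @abstractmethod
    def execute(self, action: str, **params: Any) -> Any:
        """Run one action on the tool and return its result."""


class FakeOscilloscopeAdapter(ToolAdapter):
    """Stand-in for an oscilloscope; returns canned voltage levels."""

    def __init__(self, levels: Dict[int, float]) -> None:
        self._levels = levels

    def execute(self, action: str, **params: Any) -> Any:
        if action == "read":
            return self._levels[params["channel"]]
        raise ValueError(f"unsupported action: {action}")


class ExecutionProvider:
    """Server-side sketch: routes requests to the registered adapters."""

    def __init__(self) -> None:
        self._adapters: Dict[str, ToolAdapter] = {}

    def register(self, tool: str, adapter: ToolAdapter) -> None:
        self._adapters[tool] = adapter

    def request(self, tool: str, action: str, **params: Any) -> Any:
        return self._adapters[tool].execute(action, **params)


provider = ExecutionProvider()
provider.register("scope", FakeOscilloscopeAdapter({1: 3.3}))
first = provider.request("scope", "read", channel=1)

# Exchanging the tool only means registering a different adapter;
# the request made by the test execution side stays the same.
provider.register("scope", FakeOscilloscopeAdapter({1: 5.0}))
second = provider.request("scope", "read", channel=1)
```

Because callers only see `request`, replacing one tool with another does not affect the code that coordinates the test step.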

Testing the modules of the test object required dividing the entire system to be tested into functionally meaningful units. In order to test the functionality of a module, its interfaces were connected to the test environment and a model was created that simulates the environment of the test object with a sufficient degree of abstraction.

Adapters, known as services, were developed to connect the modules to be tested to ContinoProva. They integrate external tools into ContinoProva and make their functions, such as stimulating or reading interfaces, available in the form of services. The integration of external tools via services made it possible to implement and adapt module-specific adapters quickly and with little effort. This provided a flexible test environment that could be used for multiple modules.

Another advantage was the ability to reuse a test specification in different test environment constellations with different external tools. This is made possible by carefully defining the interfaces and skillfully structuring and nesting generic and tool-specific services. In this case, different bus simulators that read and write CAN messages were used for CAN bus communication.
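As a rough illustration of this reuse, the sketch below runs one tool-independent test specification against two interchangeable CAN back ends. Both simulators are in-memory stand-ins invented for this example, and the `CanBackend` interface with `write` and `read_all` is an assumption, not the interface used in the project.

```python
from typing import Dict, List, Protocol, Tuple

Frame = Tuple[int, bytes]  # (CAN identifier, payload)


class CanBackend(Protocol):
    """Hypothetical generic interface every CAN service has to offer."""

    def write(self, can_id: int, data: bytes) -> None: ...

    def read_all(self) -> List[Frame]: ...


class LoopbackSimulatorA:
    """Stand-in bus simulator that stores frames as tuples."""

    def __init__(self) -> None:
        self._frames: List[Frame] = []

    def write(self, can_id: int, data: bytes) -> None:
        self._frames.append((can_id, data))

    def read_all(self) -> List[Frame]:
        frames, self._frames = self._frames, []
        return frames


class LoopbackSimulatorB:
    """Stand-in with a different internal format, adapted to the same interface."""

    def __init__(self) -> None:
        self._queue: List[Dict[str, object]] = []

    def write(self, can_id: int, data: bytes) -> None:
        self._queue.append({"id": can_id, "payload": bytes(data)})

    def read_all(self) -> List[Frame]:
        frames = [(m["id"], m["payload"]) for m in self._queue]
        self._queue.clear()
        return frames


def heartbeat_spec(bus: CanBackend) -> bool:
    """One test specification, written only against the generic interface."""
    bus.write(0x100, b"\x01")
    return bus.read_all() == [(0x100, b"\x01")]


# The same specification runs unchanged against both back ends.
results = [heartbeat_spec(bus) for bus in (LoopbackSimulatorA(), LoopbackSimulatorB())]
```

The specification never refers to a concrete simulator, so the tool-specific part stays confined to the back-end classes.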

A hardware-in-the-loop (HiL) system from dSPACE and other external components such as PCAN and controllable relay boxes were used for the system test. These were connected to ContinoProva via services so that the ContinoProva user interface could be used as the front end for the test specification. As with the module test, functions that would otherwise have had to be simulated in the environment model were implemented in ContinoProva services. The battery simulation implemented in ContinoProva, which covers various states of charge and other battery properties, proved valuable here: it significantly reduced the test runtime and saved test resources.
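How a battery simulation service might expose different states of charge can be sketched as follows. This is purely illustrative: the linear voltage model, the voltage limits, and the low-battery threshold are made-up assumptions and do not describe the battery model used in the project.

```python
from dataclasses import dataclass


@dataclass
class SimulatedBattery:
    """Hypothetical battery stand-in with a linear voltage model."""

    v_empty: float = 12.0   # assumed terminal voltage at 0 % charge
    v_full: float = 16.8    # assumed terminal voltage at 100 % charge
    soc: float = 1.0        # state of charge in the range 0.0 .. 1.0

    def set_state_of_charge(self, soc: float) -> None:
        """Jump directly to a state of charge instead of discharging over time."""
        if not 0.0 <= soc <= 1.0:
            raise ValueError("state of charge must be between 0.0 and 1.0")
        self.soc = soc

    def voltage(self) -> float:
        """Voltage interpolated linearly between the empty and full values."""
        return self.v_empty + self.soc * (self.v_full - self.v_empty)

    def is_low(self, threshold: float = 0.2) -> bool:
        """True once the state of charge is at or below the assumed threshold."""
        return self.soc <= threshold


battery = SimulatedBattery()
battery.set_state_of_charge(0.1)
low_battery_reached = battery.is_low()
```

A test step can set the state of charge directly in such a service, which plausibly saves runtime compared with bringing a real battery to that state.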

It became apparent that implementing services required less effort than developing or expanding the environment model in the HiL environment and was therefore the more efficient solution. Parts of the environment model were developed as modular services in an easy-to-understand programming language (C# on .NET). The test environment was thus up and running in a short time. The encapsulation of tool-specific adapters in services also enabled easy testability, a high reuse rate of test artifacts, and simple error analysis. In total, approximately 10 services were developed for the project to implement interfaces and simulate the behavior of the environment.

Feedback from product developers: Berlin Heart’s developers and testers benefited from the ease of use and comprehensibility of the test specification format, meaning that only a short training period was necessary before productive testing could begin.

Sensible demarcation between module and environment: A precise analysis of the module and its interfaces is necessary in order to set sensible boundaries between the module and its environment. This influences the number and types of external interfaces of the test object.

Simulation vs. implementation: Test automation inevitably raises the question of which parts of the test system should be simulated and which should be implemented using (real) systems. The answer to this question must take into account the fact that implemented systems can increase cross-effects. This can make it more difficult to analyze the causes of errors when they occur.

First module tests, then meaningful integration tests: Testing at different test levels is necessary in order to detect and locate errors at an early stage. Integration tests should be based on a well-considered integration strategy that is adapted to the test objective and test object.
