Artificial intelligence has long been an integral part of modern applications. From driver-assistance systems to personalized advertising and automated lending, AI-based processes shape numerous areas of life and business. However, as AI becomes more widespread, one key quality issue grows in importance: bias in artificial intelligence.

Biases in data and models can cause AI systems to systematically make incorrect or discriminatory decisions. This poses a significant risk, particularly in regulated sectors such as finance. At the same time, regulatory requirements—such as those outlined in the EU AI Act—are intensifying the need not only to avoid bias but also to verifiably control it.

The key to addressing this lies in testing. However, traditional testing methods are insufficient. A systematic, statistically sound approach is required—one that ITPower Solutions develops and implements.

A particularly relevant application area for bias in AI is creditworthiness assessment. AI-supported processes are already widely used here—both in retail banking and, increasingly, in the small and medium-sized enterprise (SME) sector.

The benefits are clear: large volumes of data can be processed efficiently, decisions are made faster, and processes become more scalable.

At the same time, new questions arise. When a loan application is rejected, the applicant will want to know why. Because AI systems rely on statistical correlations, the rationale is often not immediately transparent.

The key point is this: the quality of the decision depends directly on the training data. If this data is incomplete or biased, for example because certain groups are underrepresented, the decisions the model produces are distorted in the same way.

Minorities and rare cases are particularly affected, as they occur less frequently in random samples. This can lead to the AI performing significantly worse in precisely these cases.

The issue of bias in AI is also clearly addressed in regulations.

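The EU AI Act (Article 10) requires that, for high-risk AI systems, training, validation, and test data be relevant, sufficiently representative and, to the best extent possible, free of errors and complete in view of the intended purpose.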

Furthermore, the German Basic Law mandates equal treatment and prohibits discrimination based on personal characteristics.

For AI systems, this means specifically: They must operate in a way that is both representative and non-discriminatory.

This presents a key challenge: By nature, representative data contains minorities less frequently. At the same time, the quality for these groups must not be inferior. This tension cannot be resolved using traditional testing methods.

Traditional software tests examine deterministic systems. AI systems, on the other hand, are probabilistic models that learn probability distributions from data.

This has several consequences:

A system may exhibit good overall accuracy while performing significantly worse for certain subgroups.
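A minimal sketch of this effect, using synthetic data invented for illustration: the group labels, counts, and the helper `accuracy_by_group` are assumptions, not part of the ITPower approach. It computes accuracy overall and per subgroup from the same predictions.

```python
from collections import defaultdict

def accuracy_by_group(records):
    """Return (overall accuracy, {group: accuracy}) for (group, y_true, y_pred) records."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for group, y_true, y_pred in records:
        totals[group] += 1
        correct[group] += int(y_true == y_pred)
    overall = sum(correct.values()) / sum(totals.values())
    per_group = {g: correct[g] / totals[g] for g in totals}
    return overall, per_group

# Synthetic example: 90 majority cases (86 correct), 10 minority cases (5 correct).
records = (
    [("majority", 1, 1)] * 86 + [("majority", 1, 0)] * 4
    + [("minority", 1, 1)] * 5 + [("minority", 1, 0)] * 5
)

overall, per_group = accuracy_by_group(records)
print(f"overall:  {overall:.2f}")
for group, acc in per_group.items():
    print(f"{group}: {acc:.2f}")
```

In this constructed case the overall accuracy is 0.91, while the minority group sits at 0.50. A test that only reports the aggregate figure would not reveal the gap.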

This makes it clear: Bias in AI is a statistical problem—and must also be tested statistically.

To identify bias in AI in a targeted way, ITPower Solutions follows a structured testing approach that considers two central test objects: the training data and the AI system itself.

The approach is divided into three steps.

First, a reference distribution is defined that describes the real-world deployment environment, the operational design domain (ODD).

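An obvious approach would be to derive this from the training data. However, this is unsuitable, because the training data is exactly what is to be checked against the reference in the next step: any bias it contains would simply carry over into the reference.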

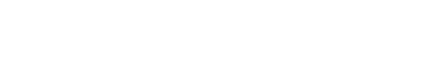

Instead, ITPower relies on modeling using ontologies. This involves systematically capturing relevant features and their relationships.

By extending these ontologies to include probabilities, a probabilistically extended ontology (PEON) is created. This allows for the definition of a robust reference distribution that is independent of the training dataset.
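How a PEON is actually represented is not described here. Purely as an illustration of the idea, the following sketch treats two related features as a tiny hand-built hierarchy and attaches probabilities to it. The feature names (`applicant`, `loan size`), all probability values, and the function `reference_distribution` are hypothetical and chosen only for this example.

```python
# Hypothetical features and probabilities, invented for illustration only.
# Marginal distribution of the parent feature.
p_applicant = {"retail": 0.8, "sme": 0.2}

# Conditional distribution of the child feature given the parent.
p_loan_given_applicant = {
    "retail": {"small": 0.7, "large": 0.3},
    "sme": {"small": 0.4, "large": 0.6},
}

def reference_distribution(p_parent, p_child_given_parent):
    """Joint distribution P(parent, child) = P(parent) * P(child | parent)."""
    joint = {}
    for parent, p in p_parent.items():
        for child, q in p_child_given_parent[parent].items():
            joint[(parent, child)] = p * q
    return joint

reference = reference_distribution(p_applicant, p_loan_given_applicant)
for combination, probability in reference.items():
    print(combination, round(probability, 2))
```

The point of the sketch is only that the resulting distribution comes from modeled domain knowledge rather than from counting rows in the training set.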

In the second step, the reference distribution is used to verify whether the training data accurately reflects the actual distribution.

Among other things, the following aspects are analyzed:

Deviations from the reference distribution indicate a lack of representativeness and pose a potential risk of bias.
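One possible way to quantify such deviations, shown here as a sketch and not as the method ITPower uses, is to compare observed category frequencies with the reference using the total variation distance and Pearson's chi-square statistic. The category names, counts, and the helper `compare_to_reference` are assumptions made for the example, which also assumes that every sample falls into one of the reference categories.

```python
from collections import Counter

def compare_to_reference(samples, reference):
    """Return (total variation distance, chi-square statistic) of samples vs. reference.

    Assumes every sample belongs to a category listed in `reference`.
    """
    n = len(samples)
    counts = Counter(samples)
    tv_distance = 0.5 * sum(
        abs(counts.get(cat, 0) / n - p) for cat, p in reference.items()
    )
    chi_square = sum(
        (counts.get(cat, 0) - n * p) ** 2 / (n * p) for cat, p in reference.items()
    )
    return tv_distance, chi_square

# Hypothetical reference distribution over three categories.
reference = {"A": 0.5, "B": 0.3, "C": 0.2}

# Hypothetical training set of 1000 records in which category C is underrepresented.
training = ["A"] * 600 + ["B"] * 300 + ["C"] * 100

tv_distance, chi_square = compare_to_reference(training, reference)

# Critical value of the chi-square distribution for 2 degrees of freedom at the 5% level.
CRITICAL_VALUE_DF2 = 5.991
print(f"TV distance: {tv_distance:.3f}, chi-square: {chi_square:.1f}")
print("deviates from reference:", chi_square > CRITICAL_VALUE_DF2)
```

With these numbers the chi-square statistic is far above the critical value, so the training set would be flagged as not representative with respect to this feature.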

In the third step, the behavior of the AI system itself is examined.

Here, quality criteria are examined that can be derived from both regulatory requirements and technical specifications, for example:

Another methodological aspect is conditioning on specific features and edge cases. This means that the system’s performance is analyzed specifically with regard to certain features (e.g., origin).

Mathematically, this corresponds to the consideration of conditional probabilities, for example in the sense of Bayesian statistics.
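As an illustration with assumed numbers, the overall error rate can be written in terms of the conditional error rates via the law of total probability. The symbols are notation chosen here, not taken from the source: E stands for "the system makes an error" and A for the feature being conditioned on.

```latex
P(E) = \sum_{a} P(E \mid A = a)\, P(A = a)
\qquad\text{e.g.}\qquad
0.9 \cdot 0.05 + 0.1 \cdot 0.50 = 0.095
```

In this example, a group that makes up 10% of cases and has a conditional error rate of 50% still leaves the overall error rate below 10%, which is why the conditional quantities have to be examined directly.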

A frequently underestimated aspect when testing AI systems is the statistical validation of the results.

To make statements about error rates and non-discrimination, sufficiently large test sets are required.

For example, a small number of tests can lead to seemingly good results without these being statistically robust. Significantly larger samples are necessary for high confidence levels.
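To give a feeling for the orders of magnitude, the following sketch uses a standard binomial argument. It assumes the test cases are independent and drawn from the reference distribution, and that no failures are observed. The function names are chosen for this example. If all n tests pass, an error rate p can be excluded with confidence 1 - alpha once (1 - p)^n <= alpha.

```python
import math

def min_tests_zero_failures(max_error_rate, confidence):
    """Smallest n such that n passing tests exclude error rates above
    max_error_rate at the given confidence level (independent tests assumed)."""
    alpha = 1.0 - confidence
    return math.ceil(math.log(alpha) / math.log(1.0 - max_error_rate))

def error_rate_upper_bound(n_tests, confidence):
    """Upper confidence bound on the error rate after n_tests passes and no failures."""
    alpha = 1.0 - confidence
    return 1.0 - alpha ** (1.0 / n_tests)

n_1_percent = min_tests_zero_failures(0.01, 0.95)
n_01_percent = min_tests_zero_failures(0.001, 0.99)
bound_10_tests = error_rate_upper_bound(10, 0.95)

print(n_1_percent)     # tests needed to show an error rate of at most 1% at 95% confidence
print(n_01_percent)    # tests needed to show an error rate of at most 0.1% at 99% confidence
print(f"{bound_10_tests:.3f}")  # what 10 passing tests actually demonstrate
```

Under these assumptions, ten passing tests only bound the error rate at roughly 26%, whereas showing an error rate of at most 1% takes about 300 passing tests. If the claim has to hold for each subgroup separately, each subgroup needs a test set of that size.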

This means: Demonstrating fairness is not only a question of methodology, but also of the depth of testing.

A key finding of this approach is that representativeness and non-discrimination are not mutually exclusive but must be analyzed separately.

Only through this separate analysis can the conformity of an AI system be demonstrated.

Bias in AI is an unavoidable challenge for data-driven systems—especially in sensitive application areas such as lending.

The combination of:

makes it clear that traditional testing methods are insufficient.

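The approach pursued by ITPower Solutions demonstrates how bias can be systematically analyzed and controlled: by defining a reference distribution that is independent of the training data, checking the training data against it for representativeness, and statistically testing the behavior of the AI system itself.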

This not only identifies bias in AI but also makes it systematically and demonstrably controllable—a key prerequisite for the secure and compliant use of AI systems.
